Darkness

It’s pitch-black outside. The air is cold and wet, yet it carries a lingering sweet smell. Sporadic beams of light dance in the night, casting an eerie glow on the landscape. Giggles, whispers, and even the occasional scream carry through the streets, a reminder that others are out and about.

Through the eyes of one of these figures, a house is seen. The figure changes course and heads in the direction of the house. The house is unlit and looks unoccupied. In one hand the figure holds a large sack; the other wields a blunt sword.

As the figure makes his way up the porch and to the door, the hand that is holding the sword points forward. The hand is not a human’s hand. It’s about twice as big as a man’s hand. Coarse, dark fur covers its skin, while jagged claws extend from the aged fingers.

The creature now stands directly in front of the door, its purpose clear. It only wants one thing and that thing remains inside the house. With a blast of energy the hand with the sword rises, lunges, and slams into the house.

A chime echoes. The front door opens. The man who opens the door smiles happily while looking down, hardly frightened by the four-foot-tall, hairy monster screaming “Trick or treat!”

That Sounds Like a Great Idea

Take a moment to catch your breath and slow your heartbeat. Despite my enjoyment of Halloween, it’s not my focus for the remainder of this chapter. However, the doorbell that so frequently sounds during that annual holiday is.

The doorbell is an outstanding example of an effective interactive control. If a 10-year-old dressed as a monster with oversize latex hands can use it effortlessly in the dark, and anyone listening in the house (even the family dog) instantly understands what it means, it must work well!

Let’s examine why the doorbell is an effective control, using Donald Norman’s Interaction Model.

Make it Visible

Any control needs to be visible when an action or state change requires its presence. The doorbell is an example of an “always present” control. There aren’t that many components on the door anyway (doorknob, lock, windows and peepholes, maybe a knocker), so the doorbell is easy to locate. In many cases the doorbell is illuminated, making it visible and enticing when lighting conditions are poor.

Cultural norms and prior experiences have developed a mental model in which we expect the doorbell to be placed in a specific location—within sight, within easy reach, and to the side of the door. So our scary monster was quickly able to locate the doorbell, even with his relatively limited prior knowledge, and to see it in the dark.

Having an object visible doesn’t have to mean it can be seen. It can also mean the object is detected. Consider someone who is visually impaired. She still has the prior knowledge that the doorbell is located on the side of the door, at eye level and within reach. Its shape is uniquely tactile, making it easy to detect when fingers or hands make contact with it. Most doorbells are in notably raised housing, and although it’s not clear if this is an artifact of engineering, it serves to make the doorbell easy to find and identify by touch as well.

In mobile devices this is a very important principle. We often cannot look at the display to find a button on the screen, but we can feel the different hardware keys. Consider people playing video games. Their attention is on the TV, not the device controller, yet they are easily able to push the correct buttons during game play.

Mapping

The term mapping within interactive contexts describes the relationship established between two objects, and implies how well users understand their connection. This relates to the mental model a user builds of the control and its expected outcome. We have learned that pushing a doorbell sounds a chime that can be heard from within the house or building, notifying the person inside that someone is waiting outside by the door.

Our 10-year-old monster has mapped pushing the doorbell to two outcomes: the chime will notify the people inside that a trick-or-treater is there, and he will receive a handful of free candy.

So mapping relies heavily on context. If the boy pushes the doorbell on a night other than Halloween, the outcome will differ. The chime will still sound, indicating that a person is waiting outside, but the likelihood of someone being there to answer and, what’s more, to hand out candy, is much lower.

On a mobile device, controls that resemble our cultural standards are going to be well understood. For example, consider the volume control. In the context of a phone call, pushing the volume control is expected to either increase or decrease the volume level. However, if the context changes, for example to the Idle screen, that button may provide additional functionality, bringing up a modal pop-up to control screen brightness and volume levels. Just like call volume, pushing up will still perform an increase and pushing down will perform a decrease in those levels.
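The context-dependent mapping described above can be sketched in a few lines. This is a minimal illustration, not any platform's actual key-handling API: the `DeviceState` fields, the context names `"call"` and `"idle"`, and the 0 to 10 level range are all assumptions made for the example. The point it shows is that the direction mapping (up increases, down decreases) stays constant while the thing being adjusted changes with context.

```python
from dataclasses import dataclass

MAX_LEVEL = 10  # assumed level range of 0..10, purely illustrative


@dataclass
class DeviceState:
    """Hypothetical device state; field names are not from any real platform."""
    context: str = "idle"          # assumed contexts: "call" or "idle"
    call_volume: int = 5
    ringer_volume: int = 5
    overlay_visible: bool = False  # stands in for the modal pop-up


def _clamp(level: int) -> int:
    """Keep a level inside the assumed 0..MAX_LEVEL range."""
    return max(0, min(MAX_LEVEL, level))


def press_volume_key(state: DeviceState, direction: str) -> DeviceState:
    """Apply one press of the volume key; 'up' always means increase."""
    if direction not in ("up", "down"):
        raise ValueError(f"unknown direction: {direction!r}")
    step = 1 if direction == "up" else -1

    if state.context == "call":
        # During a call the key adjusts call volume directly.
        state.call_volume = _clamp(state.call_volume + step)
    else:
        # On the Idle screen the same key opens an overlay and
        # adjusts a different level, with the same up/down mapping.
        state.overlay_visible = True
        state.ringer_volume = _clamp(state.ringer_volume + step)
    return state
```

Keeping the up/down rule in one place, outside the context branch, is one way to make sure the mapping users have already learned never flips between contexts.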

But you must adhere to common mapping principles related to your user’s understanding of control-display compatibility. On the iPhone, in order to take a screenshot you must hold the power button at the same time as the home button. This type of interaction is very confusing, is impossible to discover unless you read the manual (or otherwise look it up, or are told), and is hard to remember as the controls have no relation to the functional result. On-screen and kinesthetic gestures can be problematic too if the action isn’t related to the type of reaction expected. Use natural body movements that mimic the way the device should act. Do not use arbitrary or uncommon gestures.

Affordances

An affordance describes how an object’s function can be understood from its properties. The doorbell extends outward, can be round or rectangular, and has a target touch size large enough for a finger to push. Its characteristics afford contact and pushing.

On mobile devices, physical keys that extend outward or are recessed inward afford pushing, rotating, or sliding. Keys that are grouped in proximity afford a common relationship, many times a polar functionality. For example, four-way keys afford directional scrolling while the center key affords selection.

Yes, affordances are learned, just like other understandings of interactive controls. Very few of these are truly intuitive (meaning innately understandable) despite the common use of the term, and they may vary by the user’s background, including regional or cultural differences.

Provide Constraints

Restrictions on behavior can be both natural and cultural. They can be both positive and negative, and they can prevent undesired results such as loss of data, or unnecessary state changes.

Our doorbell can only be pushed and not pulled (in fact, a style of older doorbell did require pulling a significant distance). The distance the button can travel is restricted by the mechanics of the device; it cannot be moved in any direction except along the z-axis.

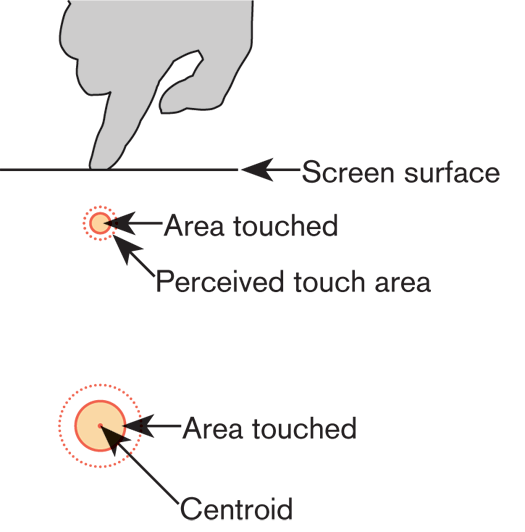

Despite the small surface and touch size, the button can still be pressed down with a finger, an entire hand, or any object, even one much larger than the button’s surface. In this context, that lack of constraint was beneficial. A user doesn’t have to be entirely accurate using the tip of the finger to access the device (see Figure 10-1). Our trick-or-treater, with huge latex hands holding a sword, was still able to push the button down, allowing for a quick interaction. More commonly, the doorbell can be pushed by someone wearing mittens or by an elbow if the person is carrying groceries.
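On a touchscreen, a similar tolerance for imprecise contact is commonly achieved by making the touch-sensitive area larger than the drawn control. The sketch below is one way to express that idea, under stated assumptions: the `Button` type, the `slop` parameter, and the use of abstract screen units are all hypothetical and not taken from any particular toolkit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Button:
    """Hypothetical on-screen control: top-left corner plus size, in screen units."""
    x: float
    y: float
    width: float
    height: float


def is_hit(button: Button, touch_x: float, touch_y: float, slop: float = 0.0) -> bool:
    """Return True if the touch lands on the button or within `slop` units of it.

    `slop` pads the visible bounds on every side, so a small drawn
    target can still have a generous touch area.
    """
    within_x = button.x - slop <= touch_x <= button.x + button.width + slop
    within_y = button.y - slop <= touch_y <= button.y + button.height + slop
    return within_x and within_y


# Usage: a 20x20 control touched 5 units to the left of its visible edge.
small = Button(x=100, y=100, width=20, height=20)
missed_without_padding = is_hit(small, 95, 110)        # outside visible bounds
caught_with_padding = is_hit(small, 95, 110, slop=10)  # inside padded bounds
```

The padding has to be chosen with neighboring controls in mind, since enlarged touch areas that overlap would make it ambiguous which control was intended.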

On mobile devices, however, it’s often necessary to define constraints on general interactive controls. Some constraints that should be considered involve:

Contact pressure

Time of contact

Axis of control

Size of control

The ability to interact with a button can heavily depend on its physical size and its proximity to other buttons or hardware elements. With on-screen targets, you must carefully consider this when designing UIs that have these interactive controls. Small touch targets must have larger touch areas, and must not be too close to a raised bezel. Consider as well the use of other sensors, and how Kinesthetic Gestures can change the effective control size, such as for the card reader in Figure 10-2. Refer to the section "General Touch Interaction Guidelines" in Appendix D.

Use Feedback

Feedback describes the immediate perceived result of an interaction. It confirms that action took place and presents us with more information. Without feedback, the user may believe the action never took place, leading to frustration and repetitive input attempts. The pushed doorbell provides immediate audio feedback that can be heard from outside as well as inside the house. Illuminated doorbells also darken while being pressed, providing additional feedback to the user as well as confirmation in loud environments, for more soundproof houses, or for users with auditory deficits.

Use feedback properly. Unlike the doorbell’s immediate indicator of an action, an elevator call or floor button (both work the same, as they should) remains illuminated as a request-for-service, until it has been fulfilled. As an antipattern, crosswalk request buttons generally provide no feedback, or beep and illuminate as pressed. There is no assurance that the request was received, or is properly queued.

On mobile devices, when we click, select an object, or move the device we expect an immediate response. With general interactive controls, feedback is experienced in multiple ways. A single object or entire image may change shape, size, orientation, color, or position. Devices that use accelerometers provide immediate feedback showing page flips, rotations, expansion, and sliding.

Gestural Interactive Controls

A growing number of devices today are using gestural interactive controls as the primary input method. We can expect smartphones, tablets, and game systems to have some level of these types of controls. Gestural interfaces have a unique set of guidelines that other interactive controls need not follow. Dan Saffer (Saffer 2009) points out five reasons to use interactive gestures:

Less cumbersome or visible hardware

More flexibility

More fun

Patterns for General Interactive Controls

The patterns in this chapter describe how you can use general interactive controls to initiate various forms of interaction on mobile devices:

Press-and-Hold

Focus & Cursors

Other Hardware Keys

Accesskeys

Dialer

On-screen Gestures

Kinesthetic Gestures

Remote Gestures